Introduction

Web applications are updated frequently in today's fast-paced development settings, and agile, integrated methodologies like DevOps are swiftly becoming the norm. To design, test, and update diverse apps and services, development teams use highly automated methods, often relying heavily on ready-made application frameworks and open source libraries.

Your development, operations, and security teams are under constant pressure to deliver more with less in a constantly evolving threat environment. Automated tools integrated within the SDLC are vital to their success, so they must be reliable, efficient, and trustworthy. Yet these tools can produce false positives. So how do you keep them under control?

Here are six ways in which the impact of false positives can be mitigated.


With the current growth in tool-based scans across the security industry, separating the true issues from false positives has never been more difficult. Fortify, AppScan, and FindBugs, for example, are tools and plugins that perform static and dynamic analysis of code. They detect faults using a common set of default rules. As a result, security analysts spend more time than ever triaging and filtering out false positives.

False positives can be demoralizing for the person examining the tool's results, who may miss the real issues amid all the noise. Instead of using the default rule packs, you can apply a tailored rule set to get more precise results.

Most recent tools have a growing user base and strong adoption potential, which suggests that their design makes them easier to adapt and to use efficiently.

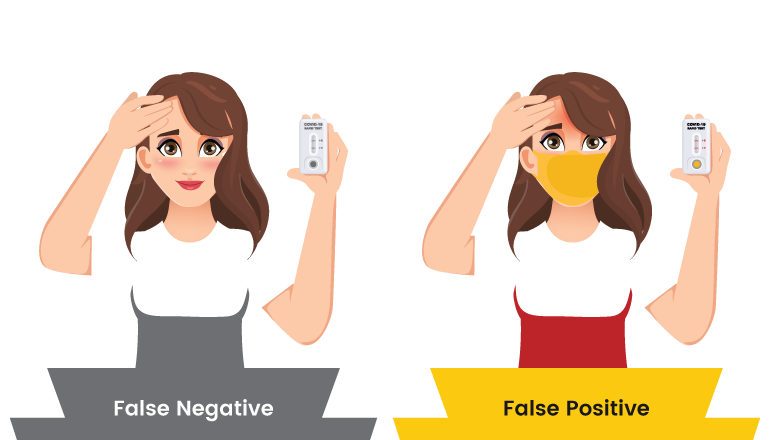

False positives and false negatives:

There are two sorts of errors to be cautious of when using a vulnerability scanning tool or another form of vulnerability identification:

Type I error: a result that shows the presence of a vulnerability when there is none. This is referred to as a false positive. It produces a lot of noise and triggers remedial work that isn't needed.

Type II error: when a vulnerability is present but not recognized, it is referred to as a false negative.

The more serious error is the false negative, which gives a false sense of security. Although it is beyond the scope of this article to cover how to spot false negatives, our general advice is to apply a variety of tools and approaches when identifying vulnerabilities, and not to assume that a clean result from a tool or tester means you are entirely secure.
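To make the two error types concrete, here is a minimal Python sketch. The function names and the counts are illustrative assumptions, not figures from any real scan. It labels a single result and computes precision (how much of the report is real) and recall (how many real issues were reported).

```python
def classify(reported: bool, vulnerable: bool) -> str:
    """Name the outcome of one check, given the tool's verdict and the ground truth."""
    if reported:
        return "true positive" if vulnerable else "false positive"
    return "false negative" if vulnerable else "true negative"


def precision(tp: int, fp: int) -> float:
    """Share of reported findings that are real issues."""
    return tp / (tp + fp) if (tp + fp) else 0.0


def recall(tp: int, fn: int) -> float:
    """Share of real issues that the tool actually reported."""
    return tp / (tp + fn) if (tp + fn) else 0.0


# Illustrative numbers only: 100 findings reported, 20 confirmed real,
# and 5 real vulnerabilities found later by other means.
tp, fp, fn = 20, 80, 5
print(classify(reported=True, vulnerable=False))
print(f"precision={precision(tp, fp):.2f} recall={recall(tp, fn):.2f}")
```

Low precision is the triage burden described above; low recall is the false sense of security.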

Advantages:

- Identify framework/IDE defaults

- Enterprise-oriented commands

- Provide consistent advice

Identify framework/IDE defaults:

A default rule set is frequently developed in order to identify trivial concerns (low-hanging fruit, so to speak). It also identifies other concerns that may be generic across many platforms and frameworks. That said, this opens the door to a lot of false positives during the scanning process. These false positives can look like legitimate issues, but in practice they are not.

For example, a basic rule could look for the keyword "TODO" in all code files. Modern frameworks and IDEs routinely annotate new methods with this kind of comment, which lets the developer quickly come back and add context.

As a result, numerous instances of the TODO keyword emerge in a tool-based evaluation. These instances may or may not be relevant or result in an attack surface.

Customizing the rules is one method to reduce noise in the review findings. This guarantees that the tool only returns results for rules that are relevant to the user.
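As a rough sketch of what such a customization amounts to, the Python below filters a list of findings against a tailored rule set. The `Finding` type, the rule IDs, and the file paths are hypothetical; real tools such as Fortify or AppScan have their own rule formats and configuration mechanisms.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    rule_id: str
    path: str
    line: int
    message: str


# Hypothetical rule IDs that the team has decided are noise for its codebase.
DISABLED_RULES = {"TODO_COMMENT"}


def apply_custom_rules(findings, disabled_rules=DISABLED_RULES):
    """Keep only findings whose rule is still enabled in the tailored rule set."""
    return [f for f in findings if f.rule_id not in disabled_rules]


findings = [
    Finding("TODO_COMMENT", "src/app.py", 12, "TODO keyword found"),
    Finding("SQL_INJECTION", "src/db.py", 40, "Query built by string concatenation"),
    Finding("TODO_COMMENT", "src/util.py", 3, "TODO keyword found"),
]

kept = apply_custom_rules(findings)
print([f.rule_id for f in kept])
```

Filtering after the scan is the simplest form of this idea. Where the tool supports it, disabling the rule in the tool's own configuration avoids producing the noise at all.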

Enterprise-oriented commands:

Most firms have their own in-house frameworks or libraries that handle the most frequent activities.

These include input validation, database connectivity, and SQL statement creation. The tool's default rules may not be appropriate for such in-house components, and may require some tuning to find the real faults while avoiding false positives.
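One common form of this tuning is telling the tool which in-house helpers already validate or sanitize data. The Python sketch below illustrates the idea under simplified assumptions: each finding carries a data-flow path as a list of function names, and every function name shown is hypothetical.

```python
# Hypothetical in-house helpers that the security team has reviewed and trusts.
TRUSTED_SANITIZERS = {"corp_validate_input", "corp_build_query"}


def is_likely_false_positive(flow, trusted=TRUSTED_SANITIZERS):
    """Treat a tainted flow as sanitized if it passes through a trusted helper."""
    return any(step in trusted for step in flow)


# Each finding maps to the chain of calls from the input source to the sink.
flows = {
    "finding-1": ["read_request", "corp_validate_input", "run_query"],
    "finding-2": ["read_request", "run_query"],
}

needs_review = [
    finding_id
    for finding_id, flow in flows.items()
    if not is_likely_false_positive(flow)
]
print(needs_review)
```

The trusted list is only as good as the review behind it: a helper added here by mistake would turn real issues into false negatives.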

Provide consistent advice:

Any medium-sized or large firm can expect to have a few hundred engineers working across multiple platforms and languages. Customization need not be restricted to the scanning rules; it can also cover the guidance that the findings present to end users.

Internal references, for example, are useful. They point developers to the company's own standards, which makes it easier to keep fixes uniform across the company.
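A minimal sketch of this kind of customization, in Python: each finding is annotated with company-specific advice looked up by rule ID. The rule IDs, the guide identifiers, and the advice text are all invented for illustration.

```python
# Hypothetical mapping from rule ID to the company's own guidance text.
INTERNAL_GUIDANCE = {
    "SQL_INJECTION": "Build queries with the in-house query helper (internal guide SEC-DB-01).",
    "XSS": "Encode output with the shared templating helpers (internal guide SEC-WEB-02).",
}
DEFAULT_GUIDANCE = "No internal guidance recorded yet; ask the security team."


def annotate(finding: dict) -> dict:
    """Return a copy of the finding with company-specific advice attached."""
    advice = INTERNAL_GUIDANCE.get(finding["rule_id"], DEFAULT_GUIDANCE)
    return {**finding, "guidance": advice}


report = [annotate(f) for f in [
    {"rule_id": "SQL_INJECTION", "path": "src/db.py"},
    {"rule_id": "WEAK_HASH", "path": "src/auth.py"},
]]
for entry in report:
    print(entry["rule_id"], "->", entry["guidance"])
```

Because every developer sees the same advice for the same rule, fixes tend to converge on the same approved pattern.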

Elements to consider:

Developers and security analysts should cooperate before going through customization to identify potential areas of concern and rules to change.

Once the rules are customized, analysts should monitor the process closely to determine what effect the changes have on tool usage and how to get the most out of a specific tool.

The rules should be reviewed and updated on a regular basis. Ensure that they reflect the most recent technology and framework changes.

DevSecOps practices to minimize false positives:

When you dig further into security technology, you'll discover a theme in the problems that arise: false positives.

What if there was a way to set up your DevSecOps pipeline to automatically recognize these for what they are, potential false positives, and not flag them during the DevSecOps process? The appropriate security professional or developer could then look at them afterward and raise an issue if they're legitimate.

Think of those typical header issues that are reported or that issue type from that tool you generally disregard because it’s most likely a false positive. Preempting them as potential non-issues ensures that they are addressed but do not slow down development cycles.
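The idea can be sketched in a few lines of Python. The rule IDs below are hypothetical stand-ins for the issue types your team usually dismisses; findings that match are routed to a background queue for later review instead of failing the build.

```python
# Hypothetical issue types that the team has found to be false positives most of the time.
LIKELY_FALSE_POSITIVE_RULES = {"MISSING_SECURITY_HEADER", "VERBOSE_SERVER_BANNER"}


def triage(findings):
    """Split findings into those that block the pipeline and those reviewed later."""
    blocking, background = [], []
    for finding in findings:
        if finding["rule_id"] in LIKELY_FALSE_POSITIVE_RULES:
            background.append(finding)
        else:
            blocking.append(finding)
    return blocking, background


def pipeline_should_fail(findings):
    """Fail the build only when at least one blocking finding remains."""
    blocking, _ = triage(findings)
    return bool(blocking)


scan = [
    {"rule_id": "MISSING_SECURITY_HEADER", "path": "/login"},
    {"rule_id": "SQL_INJECTION", "path": "/search"},
]
blocking, background = triage(scan)
print(len(blocking), "blocking,", len(background), "queued for review")
```

Nothing is discarded here: background findings are still recorded, so a reviewer can promote one to a real issue if it turns out to be legitimate.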

The Platform utilizes several of the above approaches to successfully limit the scope of concerns identified during DevSecOps. It guarantees development teams are alerted to the key issues while other, low-priority things are addressed in the background. Dev cycles are frictionless unless there’s a serious issue.

What's the best part? This also helps to avoid the dreaded misalignment of development and security teams by allowing them to interact because all issues from each tool are captured and triaged in a single, central area.

Taking hundreds of concerns and automatically narrowing them down also saves a lot of time for everyone concerned. As an added advantage, when issues are fixed, they're automatically removed from the list once the DevSecOps tools stop reporting them.
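Automatic removal of fixed issues can be approximated by reconciling the central issue list against the latest scan results. The Python below is a sketch under simplifying assumptions: the field names are hypothetical, and a finding is identified only by its tool, rule, and path, whereas a real system would need a more robust fingerprint.

```python
def fingerprint(finding):
    """Identify a finding by tool, rule, and location (a deliberately simple key)."""
    return (finding["tool"], finding["rule_id"], finding["path"])


def reconcile(open_issues, latest_findings):
    """Return (still_open, resolved) based on what the tools reported most recently."""
    reported = {fingerprint(f) for f in latest_findings}
    still_open = [i for i in open_issues if fingerprint(i) in reported]
    resolved = [i for i in open_issues if fingerprint(i) not in reported]
    return still_open, resolved


open_issues = [
    {"tool": "sast", "rule_id": "SQL_INJECTION", "path": "src/db.py"},
    {"tool": "dast", "rule_id": "XSS", "path": "/search"},
]
latest_findings = [
    {"tool": "dast", "rule_id": "XSS", "path": "/search"},
]

still_open, resolved = reconcile(open_issues, latest_findings)
print("open:", len(still_open), "resolved:", len(resolved))
```

One caveat with this approach: an issue also disappears from the report if the tool failed to run or the rule was disabled, so it is worth confirming that the scan completed before closing anything.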

Challenges:

Customization is a fairly hard procedure. It demands highly experienced technical staff, who are frequently difficult to find and expensive to keep. Even though the tools come with manuals and instructions, writing or modifying the rules is often a time-consuming task. Whatever the recommendations, the tool dictates the customization procedure.

Some tools offer visual step-by-step wizards. Others allow you to upload Excel files. Some may even require complex API access that demands significant coding ability.

It's also worth noting that customization experts must have a solid understanding of the frameworks and libraries that the organization employs. It's not only about having a working knowledge of the tool.

The expert should also have a full grasp of prevalent problems and false positives, as well as the ability to examine them in the context of the company's libraries and APIs.

To summarise, false positive reduction has been a battle for years, with a range of strategies applied. Customizing the tool's rules to align with organizational policies is, nevertheless, one of the most successful and efficient options in terms of time and expenditure. The deciding factors are primarily the tools you employ and the level of customization your firm is ready to undertake.

Are you ready with a customized tool?